Why Wikipedia Can Reject AI and Still Stay Essential

Key Takeaways:

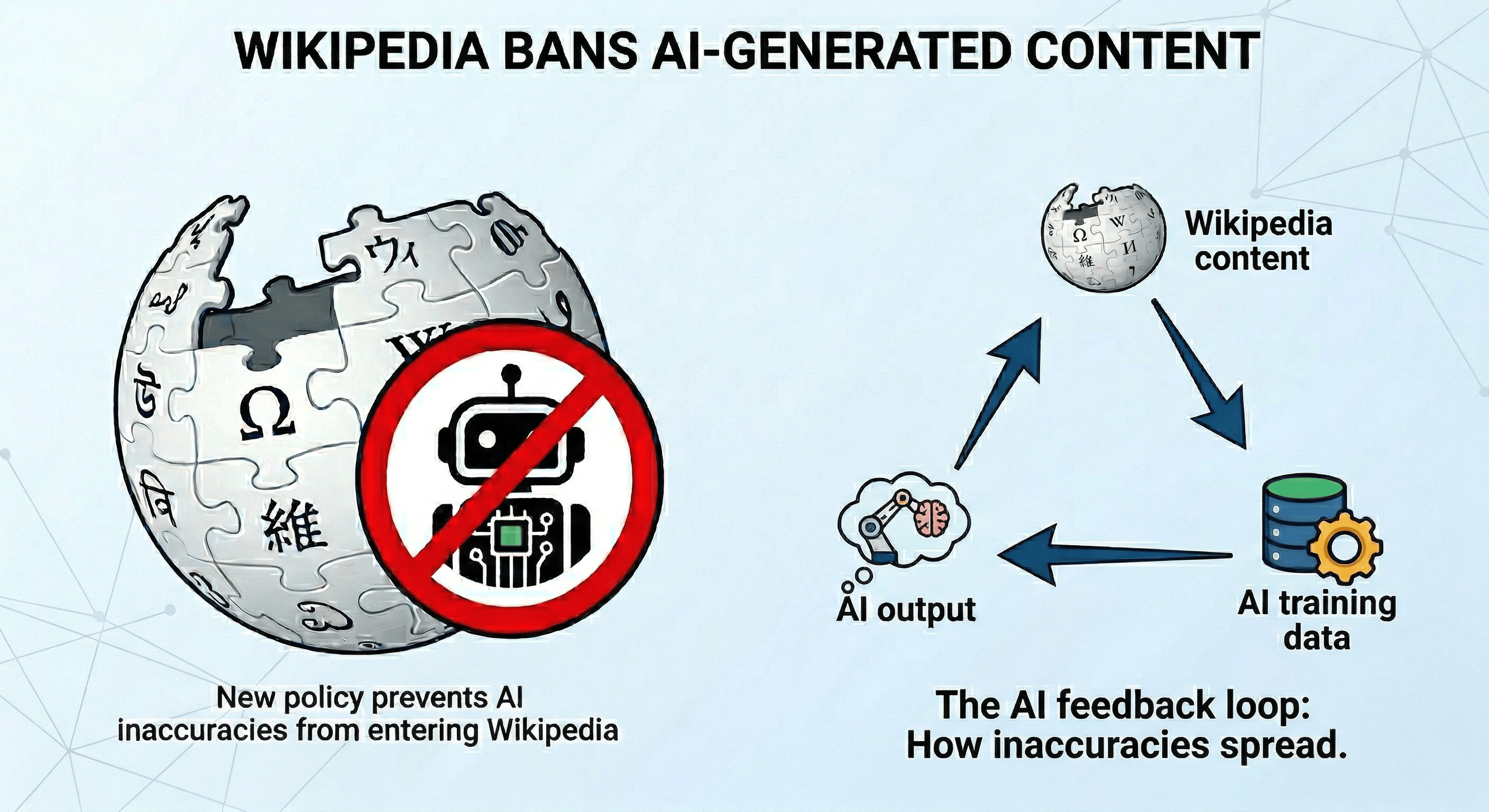

English Wikipedia editors voted 44-2 on March 20 to prohibit using LLMs to generate or rewrite article content

Two narrow exceptions: editors can use LLMs for basic copyediting of their own text (with human review) and for first-pass translations

The policy passed after months of debate and multiple failed earlier attempts to establish AI guidelines

A suspected AI agent named TomWikiAssist was caught authoring and editing multiple articles in early March, illustrating the scale of the problem

Wikipedia is among the most cited sources in AI Overviews, ChatGPT, and Perplexity. AI-generated errors on Wikipedia could enter training data and compound

Wikipedia is one of the most visited websites in the world. It is also one of the most cited sources in AI-generated search answers. And as of March 20, its editors have voted overwhelmingly to ban AI-written content from its articles.

The Request for Comment (RfC) passed with 44 votes in favor and 2 opposed. The new policy states: "Text generated by large language models (LLMs) often violates several of Wikipedia's core content policies. For this reason, the use of LLMs to generate or rewrite article content is prohibited."

TechCrunch, 404 Media, Engadget, and SiliconANGLE all covered the decision, which was reported widely on March 26.

Why Wikipedia's editors took this step

The problem was both philosophical and practical.

Wikipedia's core content policies require verifiability. Every claim must be attributable to a reliable published source. LLMs generate text without explicitly citing sources and tend to produce plausible-sounding information that may not be accurate. That conflicts directly with Wikipedia's requirement that content be checkable.

Wikipedia also prohibits original research. Articles must reflect existing published knowledge, not new synthesis. LLMs generate synthesis by design. They blend information from training data in ways that may not be supported by any single cited source.

The practical trigger was an escalating enforcement burden.

Wikipedia administrator Chaotic Enby, who authored the final proposal, noted that "in recent months, more and more administrative reports centered on LLM-related issues, and editors were being overwhelmed."

In early March, a suspected AI agent named TomWikiAssist was caught authoring and editing multiple Wikipedia articles autonomously.

As one editor noted, "An AI agent can just run wild 24 hours per day. It can cause disruption at a scale that is much larger than what a human editor can achieve."

The two exceptions are narrow

Editors can use LLMs to suggest basic copyediting improvements to their own writing. They must review the output themselves and ensure the AI does not introduce new content.

The policy warns that "LLMs can go beyond what you ask of them and change the meaning of the text such that it is not supported by the sources cited."

Editors can also use LLMs for first-pass translations between Wikipedia language editions. They must be fluent enough in both languages to catch errors and verify accuracy against cited sources.

Both exceptions require human review. Neither allows LLM-generated text to enter articles without an editor verifying every change.

The AI loop problem this addresses

Wikipedia is a primary training data source for most large language models. It is also among the most frequently cited domains in Google AI Overviews, ChatGPT, and Perplexity answers.

If AI-generated text enters Wikipedia unchecked, it gets scraped by AI companies, enters future training data, and reinforces errors in the next generation of AI models. This is a known feedback loop.

Inaccurate or hallucinated content on Wikipedia would not just misinform human readers. It would compound through the AI systems that depend on Wikipedia as a trusted source.
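To make the compounding effect concrete, here is a toy model of the loop, offered as a rough sketch and not a measurement of anything real. It assumes that each model generation reproduces the errors already in its source corpus, adds new ones at a fixed rate, and that a fixed share of the corpus is then replaced by unreviewed model output. The function name, parameters, and all numbers are hypothetical and chosen only for illustration.

```python
def simulate_error_share(generations, ai_share, hallucination_rate, initial_error=0.01):
    """Toy model of the fraction of erroneous claims in a corpus over time.

    All parameters are hypothetical:
      ai_share           -- share of the corpus replaced by unreviewed AI text each round
      hallucination_rate -- share of correct claims a model turns into errors
      initial_error      -- starting fraction of erroneous claims in the corpus
    """
    error = initial_error
    history = [error]
    for _ in range(generations):
        # A model trained on the corpus repeats existing errors and adds new ones.
        model_error = error + (1 - error) * hallucination_rate
        # Unreviewed model output replaces part of the corpus.
        error = (1 - ai_share) * error + ai_share * model_error
        history.append(error)
    return history


if __name__ == "__main__":
    # Compare a corpus with no unreviewed AI text against one where 20% is replaced each round.
    for share in (0.0, 0.2):
        rounded = [round(e, 4) for e in simulate_error_share(5, share, 0.05)]
        print(f"ai_share={share}: {rounded}")
```

In this sketch the error share stays flat when no unreviewed AI text enters the corpus and rises every round when some does, which is the pattern the ban is meant to prevent.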

The ban is Wikipedia's attempt to protect its role as a foundation of reliable information. That role matters more now than it did before AI search because AI systems actively use Wikipedia to verify claims and build citations.

The enforcement challenge is real

Wikipedia acknowledges that AI detection tools are unreliable. The policy specifies that stylistic or linguistic characteristics alone do not justify sanctions. Some editors naturally write in ways that resemble LLM output.

Enforcement relies on human moderators reviewing edits against content policies and checking an editor's recent history. Pages with less active moderation communities may be more vulnerable to AI-generated text going undetected.

The policy covers only English Wikipedia. Each language edition operates independently. Spanish Wikipedia has its own LLM ban but without the copyediting and translation exceptions.

Why content marketers should pay attention

Wikipedia's decision reinforces a principle that is becoming more important across the entire content ecosystem: original, human-verified content carries authority that AI-generated text does not.

For marketers building content strategies around AI visibility, the same logic that drove Wikipedia's ban applies to citation authority everywhere. AI systems cite sources they trust. Trust is built through verifiability, named expertise, and editorial accountability.

Content that demonstrates those qualities will earn citations. Content that reads like it was generated by an LLM, whether on Wikipedia or anywhere else, increasingly will not.

Disclaimer: This article is AI-assisted content and may contain errors. The Wikipedia policy details are sourced from the English Wikipedia RfC closed March 20, 2026, and reported by TechCrunch, 404 Media, Engadget, SiliconANGLE, and Search Engine Journal between March 23-27, 2026. Wikipedia policies vary by language edition. Verify with original sources.