Perplexity Claims Top Accuracy for Deep Research, Open-Sources New DRACO Benchmark

Key Takeaways:

Perplexity's Deep Research now runs on Claude Opus 4.5 for Max and Pro users

The upgrade scored 79.5% on Google DeepMind's Deep Search QA leaderboard

New DRACO benchmark evaluates AI research tools across 10 real-world domains

DRACO is fully open-source, with all rubrics and evaluation prompts available on Hugging Face

Perplexity reports the lowest average latency among competitors (459.6 seconds) alongside its top accuracy scores

Perplexity wants to set the standard for how AI research tools get measured. And it's putting its own product at the top of the rankings.

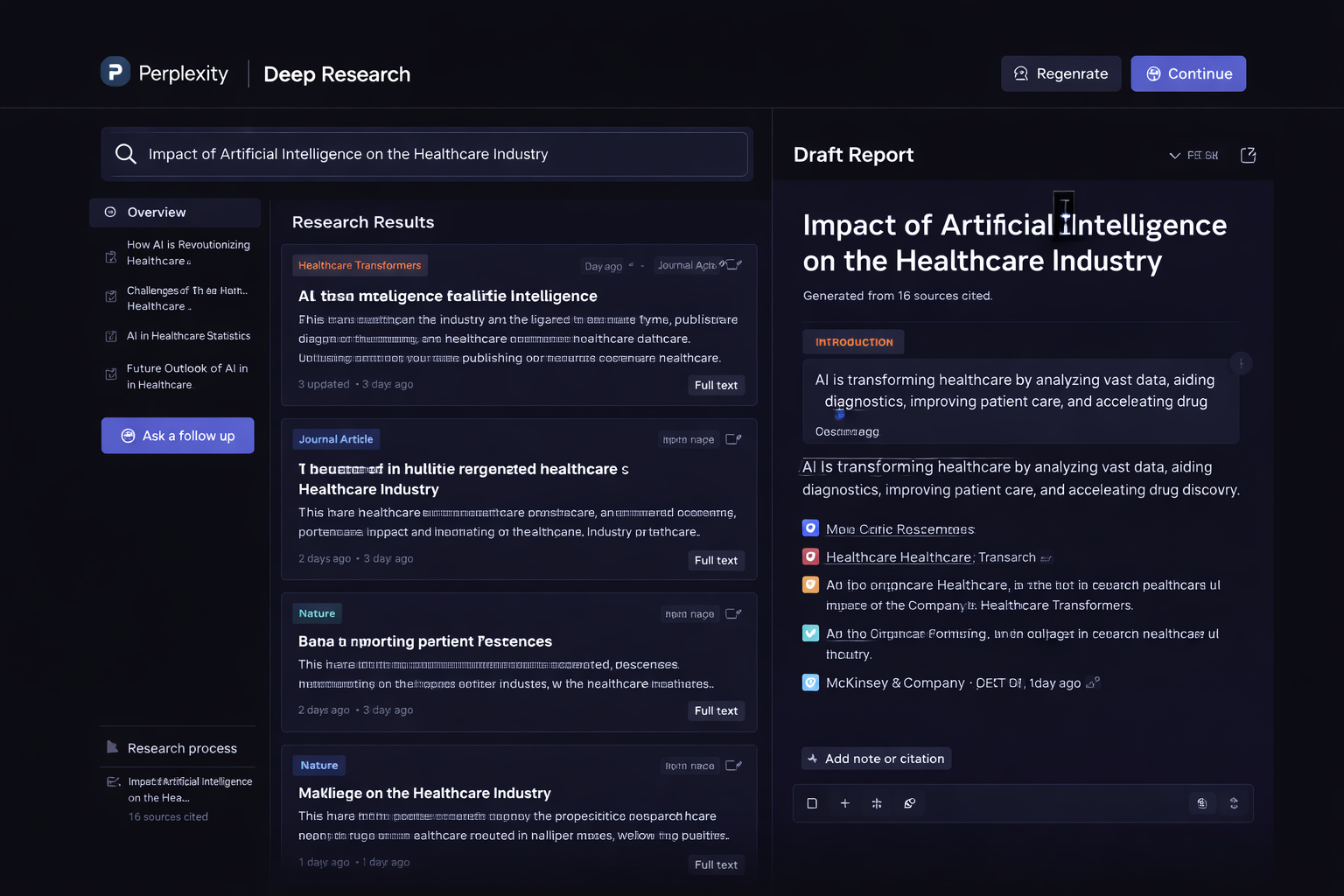

What changed in Deep Research

On February 4, the company announced an upgrade to its Deep Research feature. The tool now runs on Anthropic's Claude Opus 4.5 model, paired with Perplexity's proprietary search engine and sandbox infrastructure.

CEO Aravind Srinivas said on X that the update "achieved state-of-the-art performance on external and internal benchmarks, beating every other deep research tool on accuracy, usability, and reliability across all verticals."

The upgrade is available immediately to Max subscribers ($200/month) and is rolling out to Pro users over the coming days.

How Perplexity measures "best"

Alongside the product update, Perplexity released DRACO, a new open-source benchmark for evaluating AI research agents.

DRACO stands for Deep Research Accuracy, Completeness, and Objectivity. Unlike traditional benchmarks that test isolated skills like trivia recall, DRACO focuses on real-world research tasks.

Here's what makes it different:

100 tasks across 10 domains: Academic, Finance, Law, Medicine, Technology, General Knowledge, UX Design, Personal Assistant, Shopping, and Needle in a Haystack

Each task includes roughly 40 expert-defined evaluation criteria

Tasks are derived from millions of actual production queries submitted to Perplexity

Evaluation uses an LLM-as-judge protocol, fact-checking responses against real data

The benchmark and all rubrics are available on Hugging Face. Perplexity says it wants wider adoption to raise the bar for research agents across the industry.
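To make the rubric-based approach concrete, here is a minimal sketch of how per-task and per-domain scores could be computed from pass/fail verdicts on each criterion. This is an illustration, not DRACO's published scoring code: the `Task` structure, the unweighted "fraction of criteria met" score, and the `keyword_judge` stand-in (a simple substring check used in place of a real LLM judge) are all assumptions made for this example.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean
from typing import Callable

# Hypothetical task record: the field names are assumptions for this sketch,
# not the schema of the published benchmark.
@dataclass
class Task:
    task_id: str
    domain: str
    criteria: list[str]  # expert-defined rubric criteria for this task

# A judge takes (response, criterion) and returns True if the criterion is met.
# In a real LLM-as-judge setup this would wrap a model call.
Judge = Callable[[str, str], bool]

def score_task(task: Task, response: str, judge: Judge) -> float:
    """Return the fraction of rubric criteria the response satisfies."""
    if not task.criteria:
        raise ValueError(f"task {task.task_id} has no criteria")
    met = sum(1 for criterion in task.criteria if judge(response, criterion))
    return met / len(task.criteria)

def score_by_domain(
    tasks: list[Task], responses: dict[str, str], judge: Judge
) -> dict[str, float]:
    """Average the per-task scores within each domain."""
    per_domain: dict[str, list[float]] = defaultdict(list)
    for task in tasks:
        per_domain[task.domain].append(
            score_task(task, responses[task.task_id], judge)
        )
    return {domain: mean(scores) for domain, scores in per_domain.items()}

def keyword_judge(response: str, criterion: str) -> bool:
    """Stand-in judge (assumption): criterion is 'met' if its text appears."""
    return criterion.lower() in response.lower()

if __name__ == "__main__":
    tasks = [
        Task("t1", "Law", ["statute of limitations", "jurisdiction"]),
        Task("t2", "Finance", ["revenue", "margin"]),
    ]
    responses = {
        "t1": "The statute of limitations differs by state.",
        "t2": "Revenue grew while margin stayed flat.",
    }
    print(score_by_domain(tasks, responses, keyword_judge))
```

Swapping `keyword_judge` for a function that prompts a model with the response and a single criterion would turn this into an LLM-as-judge loop. The aggregation logic stays the same.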

Performance claims and context

According to Perplexity, Deep Research topped the Google DeepMind Deep Search QA leaderboard with 79.5% accuracy. The company also claims the lowest average latency among competitors at 459.6 seconds, or roughly 7.7 minutes per task.

In DRACO evaluations, Perplexity says it outperformed all competitors in every domain, with its strongest results in Law, Medicine, and Academic research.

Co-founder and CSO Johnny Ho posted on X: "Proud of the Perplexity team and the deep infrastructural improvements that are powering our Deep Research product."

Worth noting: Perplexity created DRACO and is reporting its own performance on it. Independent verification from third parties would strengthen these claims.

What this means for marketers and researchers

Deep Research tools are becoming more relevant for content strategists, competitive analysts, and anyone who needs to synthesize information from multiple sources quickly.

A few practical considerations:

Max subscribers get higher usage limits and consistent Opus 4.5 access

Pro users will see the upgrade roll out gradually

The tool is designed for high-stakes research in finance, law, and medicine

Open-sourcing DRACO could push competitors to improve or publish their own benchmarks

For marketing teams doing competitor research, market analysis, or content development, tools like Deep Research can save hours of manual work. But test the accuracy claims against your own use cases before relying on the tool for critical decisions.