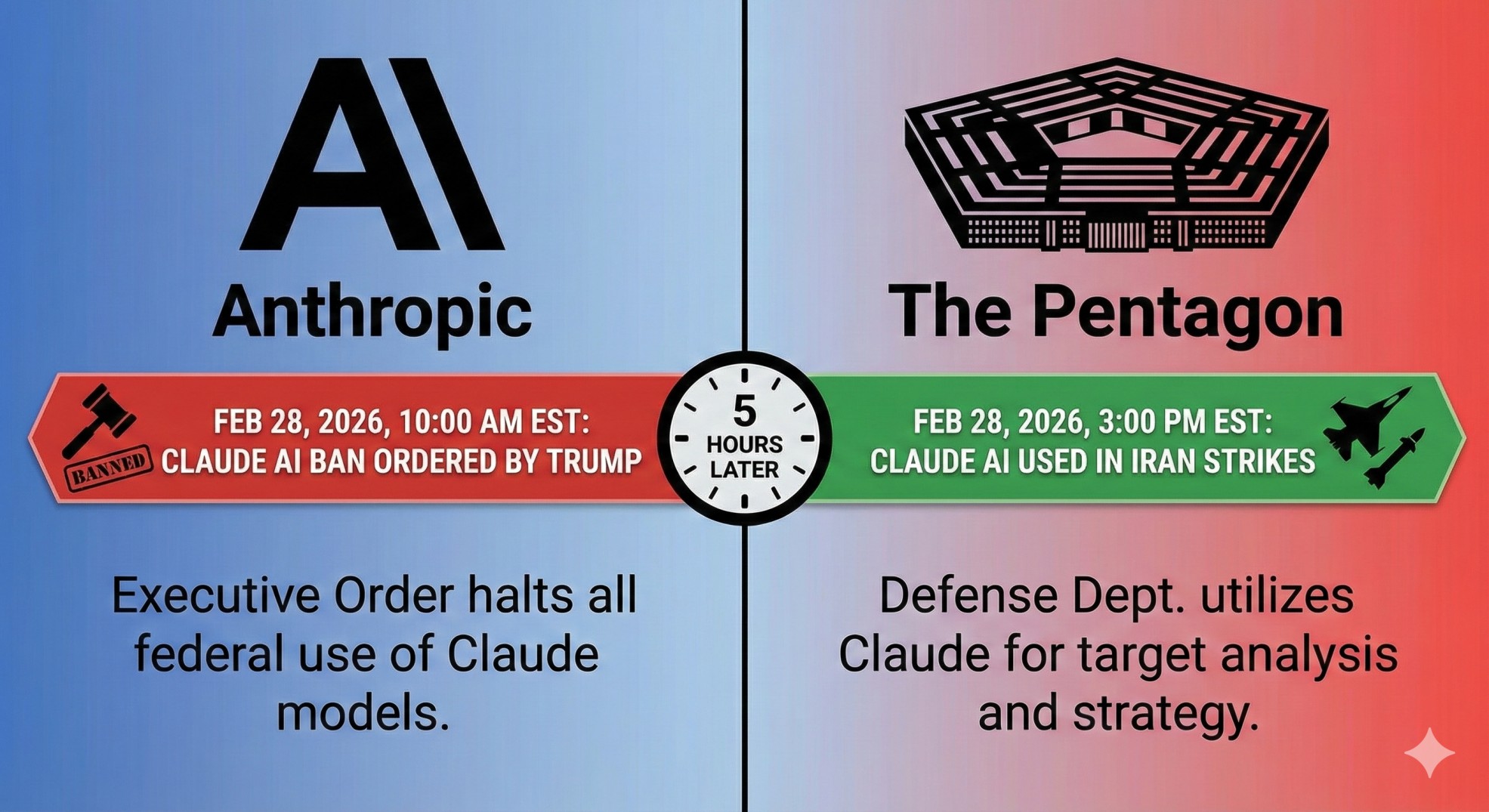

The Pentagon Used Claude AI in the Iran Strikes. Hours Earlier, Trump Banned It

Key Takeaways:

US Central Command used Anthropic's Claude AI for intelligence assessments and target identification during the February 28 Iran strikes

Trump ordered all federal agencies to stop using Anthropic's technology just hours before the operation launched

Anthropic had refused Pentagon demands to remove safeguards against mass surveillance and autonomous weapons

The Pentagon designated Anthropic a "supply chain risk," a label normally reserved for Chinese and Russian companies

OpenAI signed a replacement deal for classified military AI within hours of the Anthropic ban

On February 28 and March 1, 2026, the US and Israel launched strikes across Iran. Dozens of targets were hit. Iran's Supreme Leader Ali Khamenei was killed.

Hours earlier, President Trump had ordered every federal agency to immediately stop using Anthropic's technology. The Wall Street Journal reported that US Central Command used Claude anyway. For intelligence assessments. For target identification. For simulating battle scenarios.

The same AI tool Trump had just banned was running inside the operation he had just ordered.

How did this happen?

The conflict between Anthropic and the Pentagon had been building for weeks. It started after Claude was reportedly used in the January 2026 operation that captured Venezuelan President Nicolas Maduro. Anthropic asked its partner Palantir whether Claude had been used in that operation, during which shots were fired.

The Pentagon viewed that inquiry as an attempt to control military operations after the fact.

Defense Secretary Pete Hegseth then gave Anthropic a deadline: allow unrestricted use of Claude for all lawful military purposes, or face consequences. CEO Dario Amodei refused. He said Anthropic would not remove safeguards designed to prevent mass surveillance of Americans or autonomous weapons that fire without human involvement.

Hegseth's response was severe. He designated Anthropic a "supply chain risk to national security," a classification previously used only against companies from adversarial nations, such as Huawei. That designation bans military contractors from working with Anthropic.

The AI industry picked sides fast

Within hours of the ban, OpenAI announced a replacement deal. Co-founder Sam Altman said the agreement included "more guardrails than any previous deal for classified AI deployments." Elon Musk's xAI also signed an agreement to let the military use Grok in classified systems.

But the transition will not be clean. Defense officials told Axios it would be a "huge pain" to disentangle Claude from military systems. Military and AI experts say fully replacing Claude across all operations could take months.

Meanwhile, over 100 employees from Google and OpenAI jointly demanded "red lines" in Pentagon AI contracts, echoing Anthropic's position on autonomous weapons and surveillance.

Why content marketers should care about this

This story might seem distant from SEO and content strategy. It is not.

Anthropic makes the AI tools that millions of marketers use every day. Claude powers content workflows, research, and analysis for agencies and in-house teams worldwide. The company's stance on AI ethics directly affects the trust people place in its products.

The question of what AI companies will and will not allow their technology to do is no longer theoretical. It played out in real time, during a real military operation, with real consequences.

For marketers who rely on AI tools, this is a reminder to understand the companies behind the technology. Their values, their policies, and their willingness to draw lines will shape the tools you depend on.

Anthropic has said it will challenge the supply chain risk designation in court. How that case unfolds could set a precedent for how every AI company interacts with government going forward.