600+ Google and OpenAI Employees Just Signed a Joint Letter Against Pentagon AI. That Has Never Happened Before

Key Takeaways:

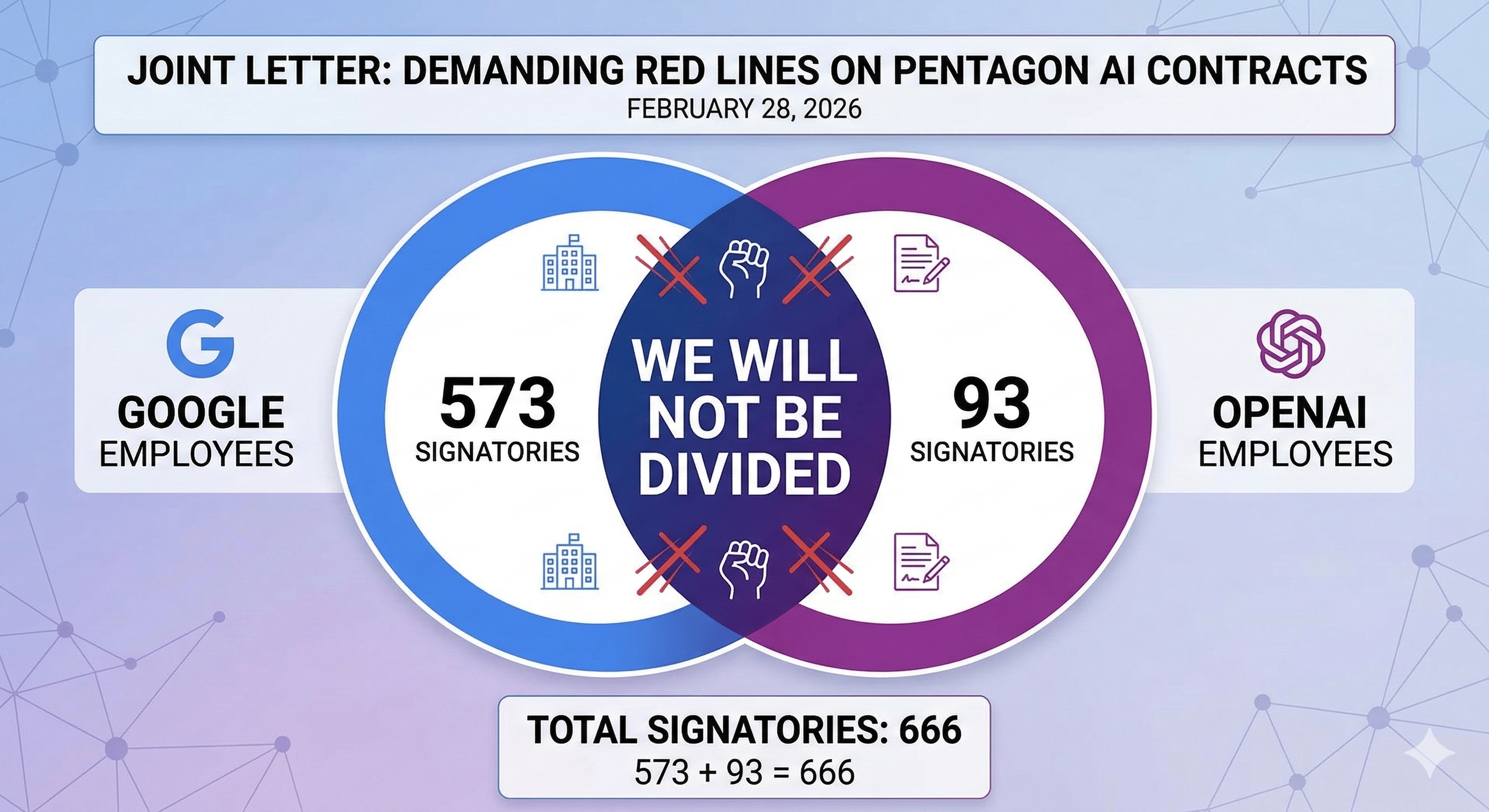

573 Google employees and 93 OpenAI employees signed an open letter titled "We Will Not Be Divided"

The letter demands their companies refuse Pentagon contracts enabling mass surveillance or autonomous weapons

Google's Chief Scientist Jeff Dean personally endorsed the letter

An OpenAI alignment researcher and a research scientist publicly criticized their own company's Pentagon deal

This is the first time employees from competing AI companies have organized together on military ethics

Employees at rival AI companies do not organize together. Until last week, they never had.

On February 28, an open letter titled "We Will Not Be Divided" went live on notdivided.org. It carried 573 signatures from Google employees and 93 from OpenAI employees. The letter argued the US government was "trying to divide each company with fear that the other will give in."

It called on both companies to refuse Pentagon contract terms that could enable mass surveillance of Americans or autonomous weapons.

This happened in the middle of the Anthropic-Pentagon standoff. It happened the same day OpenAI signed its own military deal. And it carried an endorsement from someone who almost never takes public positions: Google's Chief Scientist Jeff Dean.

What the letter actually says

The notdivided.org letter is direct. It frames the current situation as a deliberate pressure tactic by the government, pitting AI companies against each other so that each fears being the one left behind.

The letter's core demand: all signatories call on their employers to set aside competitive differences and collectively refuse the Pentagon's current contract terms.

A separate group of Google DeepMind employees wrote directly to Jeff Dean with specific concerns about Google's Gemini for Government deal from August 2025. They worried it could be extended to cover mass surveillance or autonomous weapons without explicit restrictions.

Dean endorsed the broader letter publicly. When the Chief Scientist of one of the world's largest AI companies sides with the position a rival, Anthropic, has already taken, it creates internal pressure that leadership cannot easily ignore.

OpenAI's own people spoke out publicly

The internal criticism at OpenAI went beyond the letter.

Leo Gao, who works on AI alignment at OpenAI, criticized his employer on X. He argued that the company had agreed to let the Pentagon use its technology for "any lawful purpose" and then added what he called "window dressing" to make the deal look more restricted.

Research scientist Aidan McLaughlin posted that he personally did not think the deal was worth it. That post drew nearly 500,000 views.

These are not anonymous leaks. Named employees at one of the most prominent AI companies in the world publicly questioned their own leadership's decisions. That kind of visible dissent inside a company this high-profile is rare.

This started before the Pentagon deal

The employee movement has been building for months. Earlier in February, nearly a thousand Google workers signed a separate letter urging the company to end contracts with DHS, ICE, and Customs and Border Protection. Google DeepMind staff had already raised objections to military AI use before the Anthropic standoff began.

The QuitGPT campaign, which claims 1.5 million people have taken action against ChatGPT, organized a protest outside OpenAI's San Francisco headquarters on March 3.

OpenAI CEO Sam Altman acknowledged the backlash. In his X post admitting the Pentagon deal was rushed, he said he was surprised by how many critics "seemed to have more faith in unelected tech executives making decisions about the appropriate use of AI rather than government officials."

Why this matters for the tools you use every day

If you use ChatGPT, Claude, or Gemini professionally, the people who build those tools are now publicly fighting over how they get used.

That fight is not abstract. It affects product decisions. It affects what safeguards stay in the tools and which ones get removed. It affects whether the company you trust with your data and workflows prioritizes revenue or principles when those two things collide.

The employee coalition is significant because it creates accountability from the inside. Executives at Google and OpenAI cannot dismiss this as external noise. These are their own engineers, researchers, and alignment specialists.

Anthropic drew a line. Its employees did not need to organize because leadership had already taken that position. At Google and OpenAI, the employees are drawing the line themselves.

What happens next depends on whether these companies listen to their own people. And whether users reward or punish the decisions they make.